An ode to research software

Why research software is undervalued and how we can start appreciating it

At our office, there’s a running joke about whether you’re team R or team Python. At the moment it’s finely poised, perfectly split 50/50 between those who are R-tists and those who are Pythonistas. For the uninitiated, both are flexible open-source programming languages used for a variety of tasks, ranging from basic statistics to running artificial intelligence models. In our case, we use them for research purposes. It isn’t as if this is a two-horse race, either. There are hundreds of different software tools used in research, with some specifically designed for more niche fields (such as phylogenetics in my research, looking at the evolutionary history of organisms and viruses). Within each of these tools, thousands of add-on packages may also be developed for specific purposes, such as conducting statistical analyses more efficiently or visualizing data. The volume of software is enormous.

The fact that research software can inspire such strong identities in people is both amusing (reminiscent of sports fans) and very telling. It’s telling because it shows how ubiquitous the software is in our daily research lives, intertwining itself with our self-identity as researchers.

Your choice of software therefore also reveals something about you. It says something about your research area (e.g., medical researchers and biologists tend to favor R, while computer scientists tend to favor Python) and about your approach to coding. The icons populating your desktop thus constitute a (nerdy) form of social signaling.

Zooming out, these same cultural identities create communities of like-minded people who appreciate the value of software in their work; in turn, these communities are the life force behind developing and maintaining software.

This post is therefore an ode to research software, building on this appreciation for intangible lines of code. We’ll have a look at how ubiquitous research software really is and how we can broaden its appreciation beyond the communities that build and maintain it.

A major contemporary issue in research is that contributions to software are relatively undervalued. Of course, you can gain a significant amount of respect and appreciation from your peers, particularly if they use your software regularly in their work. There are also ways of racking up citations, namely when software developers share their new software through scientific articles, which might look something like this:

The good thing about publishing your software in academic journals, at least from an individual perspective, is that you get credit for it in the form of publications and citations. In my upcoming book, I refer to this as scientific capital. What I mean by scientific capital is that publications and citation counts (as well as associated metrics such as the H-index) work like an academic currency, which you can trade in for benefits, including promotions, fellowships, or being invited to join an exclusive academic society.

The effects of this scientific bargaining and currency collection in science more broadly are worth discussing separately, but the result is that contributors to research software end up undervalued relative to their ‘true’ contribution, since these contributions aren’t measured as tangibly as publications, citations, or patents.

Understandably, this has led to calls for both researchers and funders to appreciate the value of research software, putting it on par with other research activities. Here are a few different quotes that sum up why software and packages are undervalued, and how important it is to appreciate them more:

Research software is essential for research and is being more strongly recognized globally by researchers. The National Science Foundation (NSF) [in the United States] identifies software as ‘directly responsible for increased scientific productivity and significant enhancement of researchers’ capabilities’. National and international policy changes are now needed to escalate this recognition and to increase the impact of the software and code in important research and policy areas.

While there are established norms in science for giving formal citation and credit to the authors of scholarly papers going back centuries, software—as many types of non-traditional research outputs —is often neglected or treated as a second-class type of output and eminently hard to cite.

A similar sentiment is echoed by Dario Taraborelli, a scientific program officer at the Chan Zuckerberg Initiative (CZI) (which we’ll return to a bit later):

If you look at the key breakthroughs, not just in biomedicine, but in science in the last decade, they have consistently been computational in nature [...] And scientific open-source software specifically has been at the core of these breakthroughs.

In fact, we probably underestimate how undervalued software really is. I mentioned a bit earlier that software doesn’t always make it into an academic article, so its developers don’t get scientific ‘credit’ for their work. But a large chunk of the contribution to research software isn’t just creating it, but also maintaining or updating it, which tends to go unnoticed.

Researchers may also create code (or packages) within existing software, which will similarly not generate much attention, despite the fact that these packages might be extremely useful for researchers in accelerating their research.

Personally, I’ve recently been working on an article using a suite of different indices to describe the shape of phylogenetic trees (i.e., the evolutionary history of organisms). But instead of having to code the math behind the indices myself, I found that the majority of them were already integrated into packages within R. I’m not exaggerating when I say that this might have saved me 100 hours of work. I didn’t just save time coding; I also saved time by not having to find bugs in the code or confirm that I was getting the right results. Of course, I could send the developers an email thanking them for their work, which might provide some short-lived happiness, but it probably won’t help them during their next round of funding.

Hopefully I’ve convinced you that research software is undervalued, and hence deserves this ode in the first place. Fortunately, several initiatives have recently been launched to change this by measuring how often research software is used in research. Let’s start with one funded by the CZI, which was the inspiration for this post in the first place.

In a recent pre-print, the researchers developed an ingenious method to measure how often research software is mentioned in the biomedical literature. First, they created an enormous dataset of articles. The dataset was based on the entire repository of open-access articles in PubMed (a repository for biomedical research articles), totaling 3.8 million articles, as well as a collection of 16 million articles held by the CZI, obtained through their agreements with various publishers.

They then trained a model based on SciBERT, a language model pretrained on scientific text, to extract mentions of software from the articles. Since the same software might be mentioned in a variety of ways, such as by its full name or simply by an acronym, they then clustered all the different mentions into distinct software groups. Finally, they “hand-checked the top 10,000 most frequently occurring mentions to remove inaccurate entries”, such as non-computational methods, names of operating systems, and names of initiatives related to but distinct from software that had been incorrectly classified as software mentions.
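To make the clustering step more concrete, here is a minimal Python sketch of how different spellings of the same tool could be collapsed into one group. It is a toy illustration and not the method used in the pre-print: the `ALIASES` table, the `normalize` and `group_mentions` functions, and the example strings are all hypothetical, and a real pipeline would need far more robust matching.

```python
import re
from collections import defaultdict

# Hypothetical alias table: maps known variants to one canonical key.
ALIASES = {
    "image j": "imagej",
    "statistical package for the social sciences": "spss",
}

def normalize(mention: str) -> str:
    """Reduce a raw software mention to a rough canonical key."""
    key = mention.lower().strip()
    # Drop version tags such as "v1.53" or "version 25".
    key = re.sub(r"\bv(?:ersion)?\.?\s*\d+(?:\.\d+)*\b", "", key)
    # Turn punctuation into spaces, then collapse repeated whitespace.
    key = re.sub(r"[^a-z0-9 ]+", " ", key)
    key = re.sub(r"\s+", " ", key).strip()
    # Map known aliases (full names, alternate spellings) to one key.
    return ALIASES.get(key, key)

def group_mentions(mentions):
    """Group raw mention strings by their normalized key."""
    groups = defaultdict(list)
    for mention in mentions:
        groups[normalize(mention)].append(mention)
    return dict(groups)

raw_mentions = [
    "ImageJ",
    "Image J",
    "imagej v1.53",
    "SPSS",
    "Statistical Package for the Social Sciences",
    "SPSS version 25",
]
groups = group_mentions(raw_mentions)
for name, variants in groups.items():
    print(name, len(variants), variants)
```

In this sketch, the six raw strings collapse into two groups (`imagej` and `spss`) with three variants each. Even this tiny example hints at why a manual check of the most frequent mentions is still useful: simple rules like these can easily merge or split names incorrectly.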

So how many mentions did they find?

From the PubMed collection, they found over 1.5 million mentions of software, along with 934 thousand mentions from 3 million papers in the publishers’ collection. An enormous number of mentions!

(Note: not all articles mention software, and certain articles could have several mentions of software).

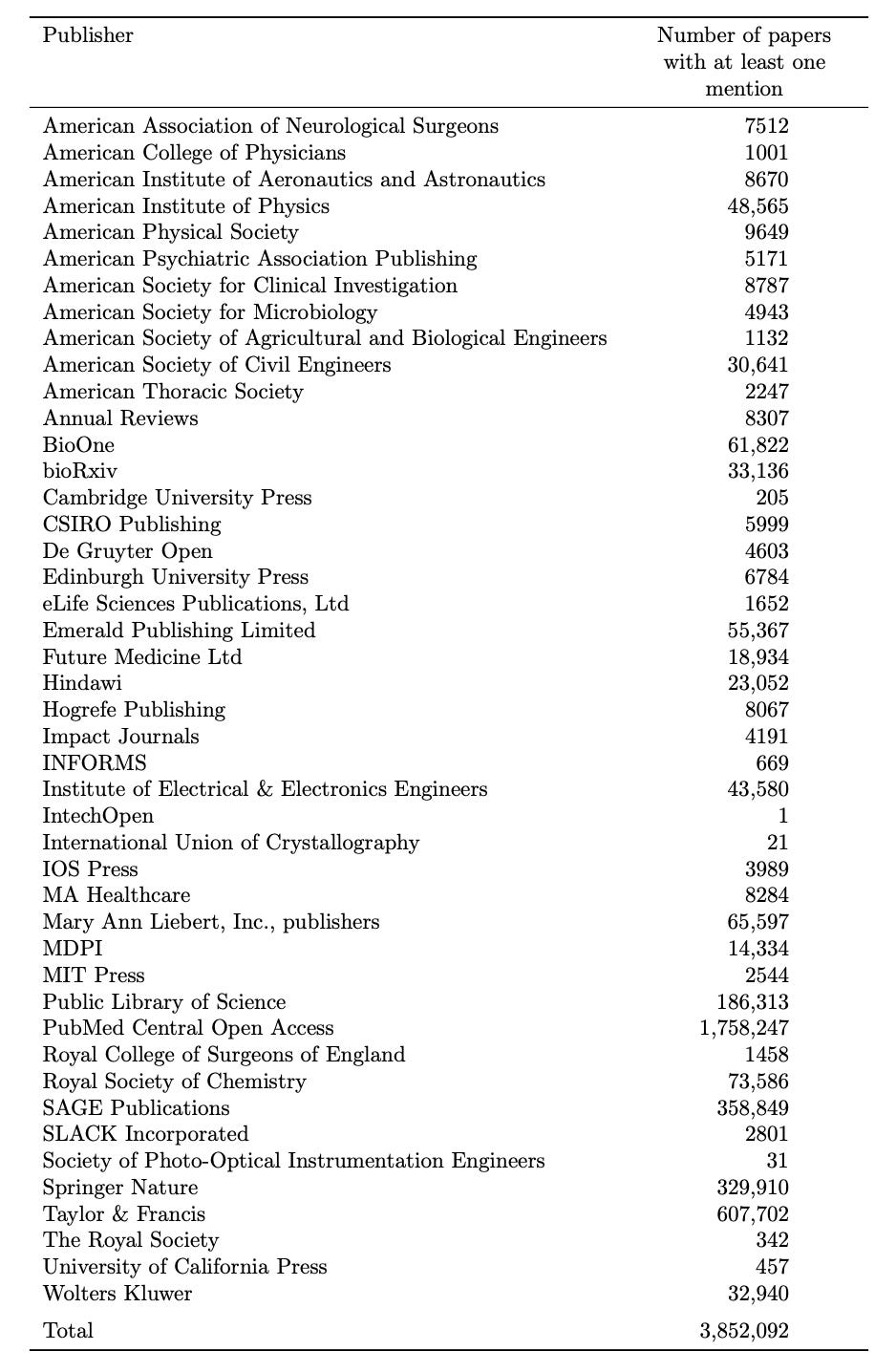

In the table below, you can see how many papers had at least one mention of research software within their publisher collection:

Here’s another table from Nature, showing the five fastest-growing tools in the CZ database:

The CZI dataset, however, isn’t the only piece of work in this field. In their announcement post, the authors also credit previous work as an inspiration, including:

SoftwareKG, a knowledge graph that contains information about software mentions from more than 50K scientific articles from the social sciences; SoMeSci, a curated collection of 3.7K software mentions in a collection of 1.3K PubMed Central articles; Softcite, a dataset of manual annotations of 5K academic PDFs in biomedicine and economics; and a release of 318K software mentions based on the CORD-19 dataset.

Hopefully, the aggregate effect of these initiatives is that we get a much better understanding of how ubiquitous research software is. By extension, this might drive a change in scientific culture, toward one that appreciates software much more than is currently the case.

Conceivably, it could also contribute to research software being on equal (or closer) footing with standard research articles when funders/committees evaluate the scientific contributions of applicants. The idea would be to elevate research software as a unique scholarly output, resulting in greater credit being allocated to those working in the research software world.

The hope is also that this will lead to more funding for these types of projects. The money allocated to software could certainly be used to work on new software but, perhaps just as importantly, it would also allow for the maintenance of existing software.

Fortunately, there are some signs that things are changing:

Schmidt Futures, a science and technology-focused philanthropic organization founded by former Google chief executive Eric Schmidt and his wife Wendy, launched the Virtual Institute for Scientific Software (VISS), a network of centres across four universities in the United States and the United Kingdom. Each institution will hire around five or six engineers, says Stuart Feldman, Schmidt Futures’ chief scientist, with funding typically running for five years and being reviewed annually. Overall, Schmidt Futures is putting US$40 million into the project, making it among the largest philanthropic investments in this area.

Interestingly, funding could also be used to develop communities. As I mentioned in the introduction, software can inspire strong identities in individuals. This is both because researchers frequently use these tools and because they become part of a larger network of scientists who use the same tools.

Funding the maintenance of these communities (through social events, conferences, or online forums) might therefore lead to researchers being more invested in software. This could increase the likelihood of people wanting to maintain or contribute to software themselves, such as through finding bugs in other people’s code or creating packages themselves. In case you’re interested in reading more about how to fund software, I’d highly recommend this article.

Lastly, a greater focus on software might also lead to the growth of research software engineering (RSE) (i.e., software engineers who help build software infrastructure, fix bugs, and maintain code) as a legitimate and valued career path. (Sidenote: the UK Society of Research Software Engineering and the US Research Software Engineer Association are two groups currently operating in this space).

Just as lab technicians, statisticians, and data managers are already well integrated into research teams, research software engineers might also become ‘typical’ members of large-scale scientific teams.

Given the importance of research software in the progression of science, it’ll be fascinating to see whether research software engineers can establish themselves more formally as integral parts of the scientific enterprise. Until then, research software will certainly remain an untold health story.

That’s my ode to research software - thanks for reading!

Got any untold health stories I should write about? Or a story you’d like to pitch and write yourself? Write to me at markkhurana@gmail.com or catch me on twitter @markkhurana!